
In 2002, the company for which I worked was subcontracted to support the US Navy Precision Approach Radar Trainer (15G33). The trainer was built in the early 1980s and was cutting edge at the time of its design. Unfortunately, time has a way of working against trainers, and the 15G33 was coming to the end of its service life. The Texas Instruments graphics card, the International Telephone and Telegraph (ITT) voice recognition card, and the Seagate hard drive were no longer being manufactured. Cannibalizing other trainers for parts was the only way to keep the remaining trainers running.

The trainer was considered by the sailors who trained on it as difficult to use and a poor imitation of the real equipment used in air traffic controller facilities. The trainer really just looked like a computer with a monitor, track ball, keyboard, and a headset. To sailors in 2002, the trainer's CPU speed was truly unacceptable. Worse, trainees were required to perform voice recognition training, a process whereby the sailor had to sit at the trainer and repeat phrases generated by the trainer. The voice recognition training sessions could last hours and, if the sailor came down with a cold, the results of the earlier voice training would become unusable. Because of its deficiencies, the trainer was seldom used. It sat almost wholly neglected in naval air station towers. Records showing trainer use (mandated by Navy instructions) were gun decked.

The in-service engineer asked me what could be done to continue the life of the trainer. I recommended reengineering the trainer using Commercial Off The Shelf (COTS) components. The reengineered trainer would repair some serious design flaws that got in the way of sailors using the trainer. I suggested that we build a prototype to see if the Navy would agree to fund rebuilding the trainer.

I wrote a very simple prototype that allowed a target to be maneuvered using voice control. Its purpose was to aid in the selection of voice recognition software. Originally, I hoped to use the built-in Speech API (SAPI) provided by Microsoft in the Windows operating system. Unfortunately, the Microsoft SAPI performed so badly that I was forced to search out another speech recognition package. I decided on the IBM ViaVoice voice recognition software. It was easy to integrate, and its distributor, Wizzard Software, provided strong technical assistance with ViaVoice's intricacies. The advantage of ViaVoice was that no voice recognition training was required. The voice-controlled prototype's target was easily maneuvered.

ViaVoice worked very well in recognizing voice. But its Text to Speech (TTS) capabilities were not satisfactory. I was forced to search out another TTS package. I determined that the AT&T Natural Voices TTS product exceeded my needs. Again, Wizzard Software provided technical assistance when the AT&T support staff was incapable.

I integrated pilot response into the prototype (now becoming more complex than originally conceived) so that when a command was given, the prototype would respond appropriately. I now had a prototype that accepted voice commands, moved the target on the monitor, and acknowledged the command audibly. It was an interesting exercise to demonstrate the viability of the concept. Having proved the concept to the in-service engineer, the next step was to provide a more complex prototype that could be tested and hopefully approved by the US Navy and Marine Corps air traffic controllers.

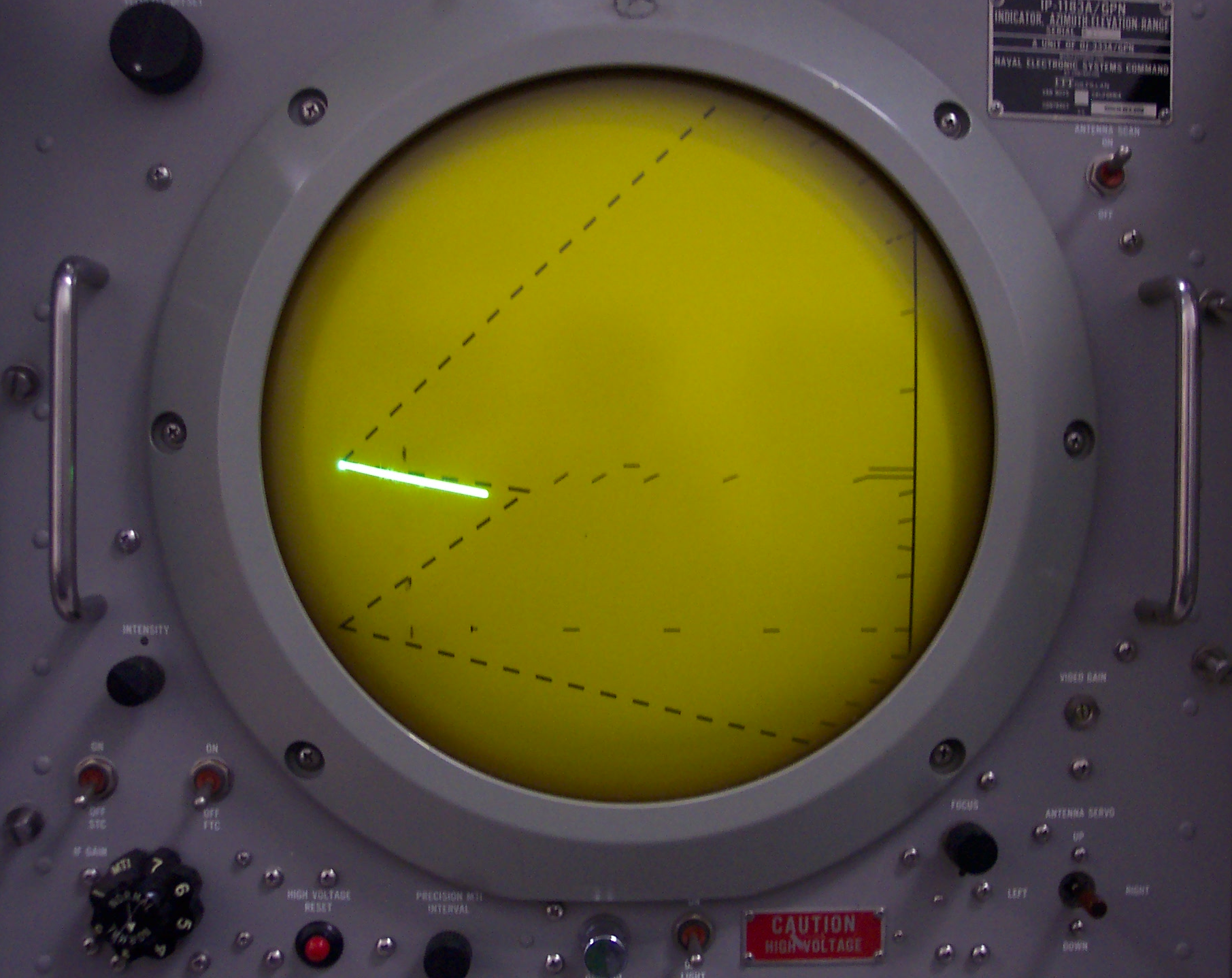

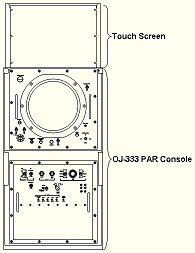

A major stumbling block was the packaging of the existing trainer. There was no question in my mind that the trainer had to look like the OJ-333 Precision Approach Radar (PAR) console, used at US Navy airfields world-wide. An OJ-333 PAR console had become available on the west coast. One of the project team members had served as a US Navy ATC, and she was able to convince the powers that be to give the console to the project. When it arrived, it was stripped of all components except those that were used by the controller. The project's very competent hardware engineer installed a monitor in place of the radar scope and a computer in place of the guts of the console. We now had a trainer console with the look and feel of the OJ-333.

But US Navy air traffic controllers interact with more than just the OJ-333 PAR console. They also needed to interact with:

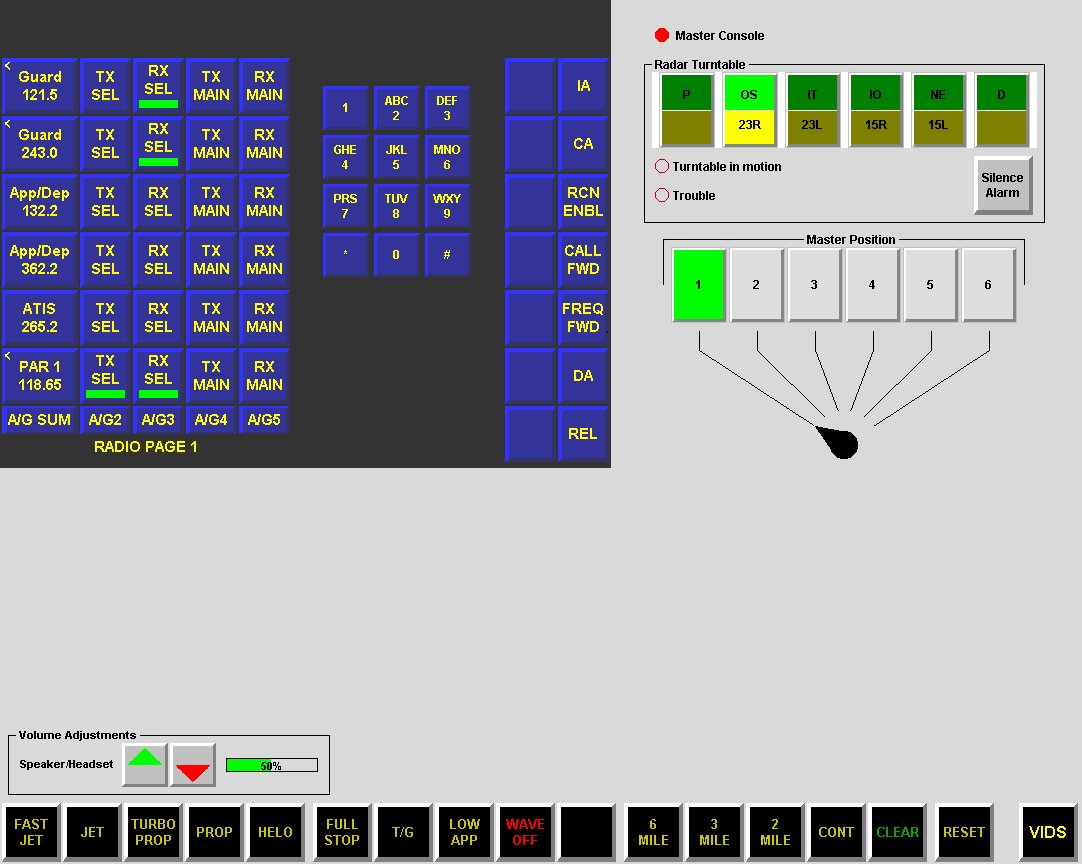

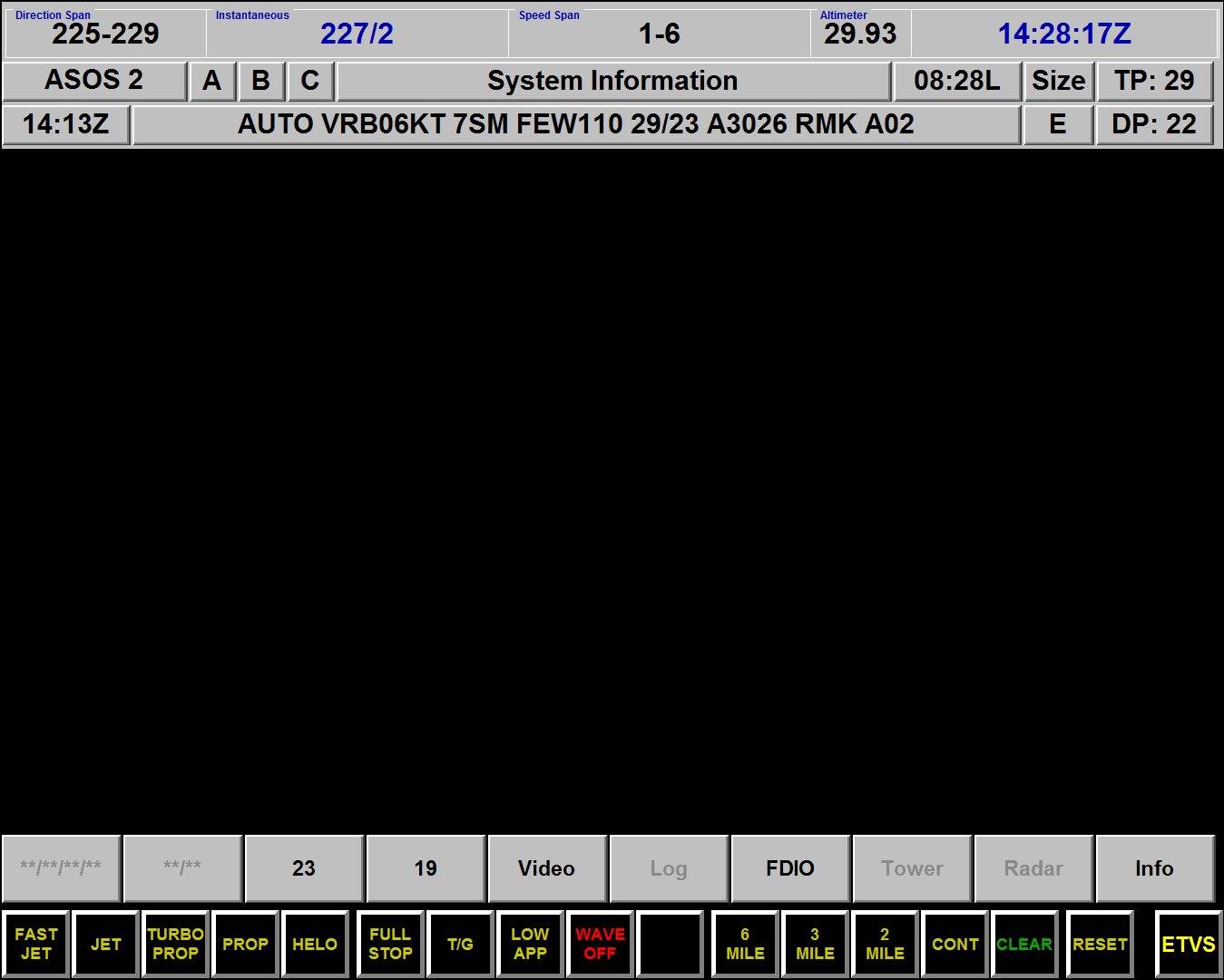

The problem was that these devices could not fit onto the OJ-333 without disturbing the look and feel of the console. To provide training using the external devices, I added a touch screen that would be attached to the top of the OJ-333. The designed hardware solution became as depicted to the left.

The touch screen could emulate as many devices as were desired and could implement site-specific controls for the Improved Precision Approach Radar Trainer (IPART). Almost all of these controls take the form of push buttons. The push buttons operate with a dual push state. The push state of a button can be either pushed or not pushed. When a push button is pushed, the button is drawn as a sunken button. When it is not pushed, it is drawn as a raised button. IPART is wholly responsible for drawing both push states.

However, there were certain limitations. The touch screen width caused the IPART VISCOM to be slightly smaller than in the "real world." The touch screen height caused the IPART VIDS to be slightly smaller than in the "real world." In the IPART Master Servo, the six-position switch and lighted indicators were replaced by six lighted push buttons, operating as radio buttons. In the IPART Radar Turntable, the six interlocked, lighted push buttons were replaced by six lighted push buttons, operating as radio buttons.
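The two-state push buttons and the radio-button groups described above can be sketched in a few lines of C. This is only an illustration under stated assumptions: the type and function names (`PushButton`, `button_toggle`, `radio_group_press`, `radio_group_selected`) are hypothetical and are not taken from the IPART source, and the drawing of the raised and sunken states is left out.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical names throughout; not taken from the IPART source. */
typedef enum { BUTTON_RAISED = 0, BUTTON_SUNKEN = 1 } PushState;

typedef struct {
    const char *label;   /* text drawn on the button face */
    PushState   state;   /* raised (not pushed) or sunken (pushed) */
} PushButton;

/* Flip an ordinary two-state push button between raised and sunken. */
void button_toggle(PushButton *b)
{
    assert(b != NULL);
    b->state = (b->state == BUTTON_SUNKEN) ? BUTTON_RAISED : BUTTON_SUNKEN;
}

/* Radio-button behavior: pressing one button in a group sinks it
   and raises every other button in the same group. */
void radio_group_press(PushButton *group, size_t count, size_t pressed)
{
    size_t i;
    assert(group != NULL && pressed < count);
    for (i = 0; i < count; i++) {
        group[i].state = (i == pressed) ? BUTTON_SUNKEN : BUTTON_RAISED;
    }
}

/* Return the index of the sunken button, or count if none is sunken. */
size_t radio_group_selected(const PushButton *group, size_t count)
{
    size_t i;
    assert(group != NULL);
    for (i = 0; i < count; i++) {
        if (group[i].state == BUTTON_SUNKEN) {
            return i;
        }
    }
    return count;
}
```

With a six-element group standing in for the Master Servo or Radar Turntable buttons, calling `radio_group_press(group, 6, 2)` leaves exactly one button sunken, which is the interlock behavior the original hardware enforced mechanically.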

The design form of the touch screen became as depicted to the left and right. The ETVS screen is to the left; the VIDS screen is to the right.

Which image is displayed is chosen by the button at the lower right of the two images.

Of technical interest: the IPART software was written in Microsoft C. At the time that IPART was created, there was no support for defining graphical user interfaces (as there is today in the Visual Studio Designer). Thus, to provide all of the controls (buttons, knobs, labels, radio buttons, sliders, etc. and their interrupt handlers), a graphics API had to be designed and built.

Console switches include all of the switches and potentiometers found on the OJ-333 console. Switch changes cause changes to the OJ-333 Azimuth-Elevation-Range Indicator as well as to state variables maintained within the IPART process. The states of these switches and potentiometers are detected by Digital Input/Output (DIO) hardware and processed by the DIO driver. The DIO driver was intended to interrupt the IPART process when changes to the console switches were detected. Unfortunately, the programmer responsible for the DIO used polling instead.
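To make the polling approach concrete, here is a minimal sketch of change detection by polling. It assumes, purely for illustration, that the switch inputs arrive as a 16-bit word with one bit per switch; `DioPoller` and `dio_poll` are hypothetical names, and the actual hardware read is left to the caller because the source does not describe the DIO interface.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical poller state: the input word seen on the previous poll. */
typedef struct {
    uint16_t previous;
} DioPoller;

/* Compare the current reading with the previous one.
   Returns a mask with a 1 in every bit position that changed,
   and remembers the current reading for the next poll. */
uint16_t dio_poll(DioPoller *p, uint16_t current)
{
    uint16_t changed;
    assert(p != NULL);
    changed = (uint16_t)(p->previous ^ current);
    p->previous = current;
    return changed;
}
```

A main loop would call `dio_poll` on every pass and act only when the returned mask is nonzero. The cost relative to an interrupt-driven design is that a change is noticed only on the next poll, and cycles are spent even when nothing has changed.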

A push-to-talk (PTT) button is supplied with each headset attached to IPART. The states of the PTT buttons are detected by DIO hardware and processed by the DIO driver. As with the console switches, the DIO driver was to interrupt the IPART process when changes to the PTT buttons were detected. Changes to the PTT buttons cause changes to the state of the voice recognition software. When a PTT button is depressed, the associated microphone is turned on; when a PTT button is released, the associated microphone is turned off.
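The PTT rule (depressed means microphone on, released means microphone off) might look like the sketch below. The headset count, the bit encoding of the PTT inputs, and the names `MicState` and `ptt_update` are all assumptions made for the example; the point where real code would start or stop the voice recognizer is marked with a comment rather than a call into any actual speech library.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define MAX_HEADSETS 2   /* assumed count; the source does not state one */

/* Hypothetical record of which microphones are currently live. */
typedef struct {
    bool mic_on[MAX_HEADSETS];
} MicState;

/* ptt_bits: bit i is 1 while the PTT button of headset i is depressed
   (an assumed encoding). Returns how many microphones changed state. */
int ptt_update(MicState *m, uint8_t ptt_bits)
{
    int i;
    int changes = 0;
    assert(m != NULL);
    for (i = 0; i < MAX_HEADSETS; i++) {
        bool pressed = ((ptt_bits >> i) & 1u) != 0;
        if (pressed != m->mic_on[i]) {
            /* Real code would enable or disable recognition here. */
            m->mic_on[i] = pressed;
            changes++;
        }
    }
    return changes;
}
```

Because the function acts only on transitions, it behaves the same whether it is driven from an interrupt handler or from a polling loop.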

With the hardware defined, I now had to build a reasonable prototype that would sell IPART to the US Navy ATC community. I based the prototype on the working prototype that was used to demonstrate the viability of the IPART concept. Driven by the realization that most trainees were comfortable playing computer games, I proposed that the new trainer be built in such a way that it would be perceived as a video game. If so viewed, and given a competitive environment, I argued, trainees would train themselves. And so the IPART prototype was implemented.

The simulation model is the central component of IPART and one of its more complex software components. It consists of:

The simulation model also interacts with almost every other component. For example, when the operator chooses a frequency from the ETVS, the simulation model must ensure that the chosen frequency matches the one that Approach specified during handoff. If it does not, the text-to-speech component must not generate any responses to the IPART trainee's voice phrases.
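The frequency check amounts to a gate in front of the text-to-speech component. The sketch below is one way to express it, with frequencies held as integer kilohertz to avoid floating-point comparison. `RadioState`, `pilot_may_respond`, and `pilot_response` are hypothetical names, and the frequencies used to exercise it would be arbitrary example values.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical radio state kept by the simulation model. */
typedef struct {
    long assigned_khz;   /* frequency Approach specified during handoff */
    long selected_khz;   /* frequency the trainee chose on the ETVS */
} RadioState;

/* The simulated pilot can hear the trainee only on the assigned frequency. */
bool pilot_may_respond(const RadioState *r)
{
    assert(r != NULL);
    return r->selected_khz == r->assigned_khz;
}

/* Return the text to hand to text-to-speech, or NULL for silence. */
const char *pilot_response(const RadioState *r, const char *readback)
{
    return pilot_may_respond(r) ? readback : NULL;
}
```

Returning `NULL` rather than an empty string lets the caller skip the text-to-speech call entirely when the trainee is on the wrong frequency.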

The position component determines the x-, y-, and z-coordinates of one or more aircraft (up to six aircraft must be supported) on the surface of a flat earth model. A flat earth model was chosen because the maximum distance of aircraft from the end of a runway is limited to 20 statute miles. Thus, there will be no loss of model accuracy by adopting the flat earth model. However, there is a significant reduction in computational expense by adopting this model.
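On a flat earth the position update reduces to adding velocity times elapsed time to each coordinate. The following is a minimal sketch under that assumption; the units (feet and feet per second), the placement of the origin, and the names `Vec3`, `Aircraft`, and `position_update` are illustrative choices, not details from the IPART source.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_AIRCRAFT 6   /* the trainer must support up to six aircraft */

typedef struct { double x, y, z; } Vec3;

/* Hypothetical per-aircraft record. */
typedef struct {
    int  active;         /* nonzero while the aircraft is in the scenario */
    Vec3 position_ft;    /* flat-earth coordinates; origin is an assumption */
    Vec3 velocity_fps;   /* supplied by the motion component */
} Aircraft;

/* Advance every active aircraft by dt_s seconds using simple
   Cartesian integration; no spherical geometry is involved. */
void position_update(Aircraft fleet[], int count, double dt_s)
{
    int i;
    assert(fleet != NULL && count >= 0 && count <= MAX_AIRCRAFT);
    for (i = 0; i < count; i++) {
        if (!fleet[i].active) {
            continue;
        }
        fleet[i].position_ft.x += fleet[i].velocity_fps.x * dt_s;
        fleet[i].position_ft.y += fleet[i].velocity_fps.y * dt_s;
        fleet[i].position_ft.z += fleet[i].velocity_fps.z * dt_s;
    }
}
```

Each step costs three multiplications and three additions per aircraft, which illustrates the computational saving the flat earth model buys.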

The motion component obtains its input from the current aircraft motion as modified by commands from the IPART trainee. The motion component feeds the position component with the information needed to update aircraft position. Also, during a session, the trainee may modify the horizontal and/or vertical radar beam directions. These modifications may cause a target that was visible to become invisible, and vice versa.
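The effect of steering the beam on target visibility can be illustrated with a simple sector test. The sketch assumes the target's azimuth and elevation angles relative to the antenna have already been computed, and it models coverage as a rectangular angular window around the beam direction. The structure, the function name, and any widths used with it are hypothetical, not OJ-333 specifications.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical description of where the radar is currently looking. */
typedef struct {
    double az_center_deg;       /* horizontal beam direction */
    double az_half_width_deg;   /* half of the horizontal coverage */
    double el_center_deg;       /* vertical beam direction */
    double el_half_width_deg;   /* half of the vertical coverage */
} BeamCoverage;

/* A target is visible when both of its angles fall inside the window. */
bool target_visible(const BeamCoverage *b,
                    double target_az_deg, double target_el_deg)
{
    double d_az, d_el;
    assert(b != NULL);
    d_az = target_az_deg - b->az_center_deg;
    d_el = target_el_deg - b->el_center_deg;
    if (d_az < 0.0) d_az = -d_az;
    if (d_el < 0.0) d_el = -d_el;
    return d_az <= b->az_half_width_deg && d_el <= b->el_half_width_deg;
}
```

Changing `az_center_deg` or `el_center_deg` and re-running the test for each aircraft is enough to make a target appear or disappear as the trainee moves the beam.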

A number of environmental factors are maintained and modified by the simulation model component: wind direction and speed, temperature, dew point, and altimeter. The competitive level of the current training session controls most of these values, and it also determines the difficulty of the session. Twelve levels were identified, ranging from "A Perfect Day" through extreme weather and in-flight emergencies to ATC equipment failure. As the IPART trainee becomes more proficient, more difficult scenarios were to be presented. The simulation model used a random number generator to ensure that session activities could not be memorized.
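As a rough illustration of level-driven randomization, the sketch below draws a wind for a given competitive level. The mapping is invented for the example: level 1 is assumed to be "A Perfect Day" with calm wind, and the maximum wind speed is assumed to grow by 5 knots per level. `Wind` and `wind_for_level` are hypothetical names; only the standard C `rand` function is used.

```c
#include <assert.h>
#include <stdlib.h>

#define LEVEL_COUNT 12   /* twelve competitive levels were identified */

/* Hypothetical wind record. */
typedef struct {
    int wind_dir_deg;    /* 0..359 */
    int wind_speed_kt;   /* 0..maximum allowed for the level */
} Wind;

/* Draw a random wind whose maximum strength grows with the level.
   Assumed scaling: level 1 is calm, each further level adds 5 knots
   to the maximum. The caller seeds the generator with srand(). */
Wind wind_for_level(int level)
{
    Wind w;
    int max_speed_kt;
    assert(level >= 1 && level <= LEVEL_COUNT);
    max_speed_kt = (level - 1) * 5;
    w.wind_dir_deg  = rand() % 360;
    w.wind_speed_kt = rand() % (max_speed_kt + 1);
    return w;
}
```

Seeding with a changing value such as the current time gives a different session each run, while a fixed seed reproduces a session exactly, which is useful when debugging a scenario.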

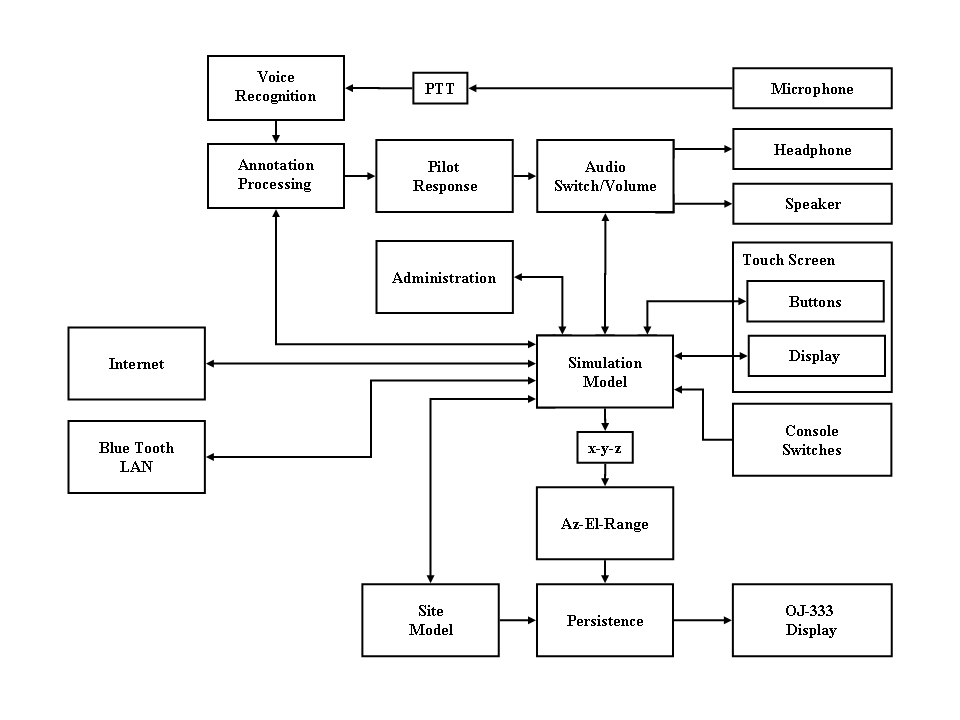

The final prototype software architecture appears as:

In the spring, the US Navy hosted the Navy and Marine Corps Air Traffic Control Symposium. The purpose of the symposium is to provide a vehicle to educate junior Navy and Marine Corps air traffic controllers on technological advances. This was the perfect place to test IPART. The prototype, which simulated only "A Perfect Day", was set up at the conference site, and ATCs were invited to try out the trainer. All were impressed. I can only say that my convictions were supported when one of the air traffic controllers returned and asked to "play the game again."

Today, IPART can be found at US Navy and Marine Corps air stations, world-wide.